AI: Anomaly Detection in logfiles

| (20 intermediate revisions by 3 users not shown) | |||

| Line 1: | Line 1: | ||

== Summary == | == Summary == | ||

| Line 10: | Line 8: | ||

* Packages: TensorFlow, Keras, Pandas, sklearn, numpy, seaborn, matplotlib | * Packages: TensorFlow, Keras, Pandas, sklearn, numpy, seaborn, matplotlib | ||

* Software: Pycharm or any other python editor | * Software: Pycharm or any other python editor | ||

* Dataset: We used the following [https://www.unb.ca/cic/datasets/ids-2018.html Dataset] | |||

== Description == | == Description == | ||

=== Step | === Step 0 - Import the needed packages/libraries === | ||

First we need to create a sequential model, which can be trained later | from keras.callbacks import EarlyStopping, ModelCheckpoint # for training | ||

from keras.models import Sequential, load_model # for model | |||

from keras.layers import Dense, Activation # layers and activation function | |||

import pandas as pd # read/prep dataset | |||

pd.options.mode.chained_assignment = None # removes warning | |||

import numpy as np # read/prep dataset | |||

import sklearn.model_selection as sk # dataset splitting | |||

import tensorflow as tf # for model | |||

import seaborn as sns # plotting | |||

from sklearn.metrics import confusion_matrix # confusion matrix | |||

from matplotlib import pyplot as plt # plotting | |||

=== Step 1 - Read the dataset === | |||

First we need to read the data, for that we can use the predefined function from pandas 'read_csv' | |||

logfile_features = pd.read_csv(path) | |||

Afterwards we replace the infinite values with nans and drop them all together | |||

logfile_features.replace([np.inf, -np.inf], np.nan, inplace=True) | |||

logfile_features.dropna(inplace=True) | |||

Our dataset has labels which define if its an attack or not, so we replace them with numericals (0 and 1) | |||

In our case Benign is for standard user traffic and DoS attacks-Slowloris and DoS attacks-GoldenEye is for traffic where a DoS attack occured | |||

logfile_features["Label"].replace({"Benign": 0, "DoS attacks-Slowloris": 1, "DoS attacks-GoldenEye": 1}, inplace=True) | |||

Next we shuffle our dataset | |||

logfile_features = logfile_features.sample(frac=1) | |||

Now we need to split our data into 3 parts: Training data (60%), Test data (20%) and Validation data (20%). | |||

To do that we use the following methods | |||

train_dataset, temp_test_dataset = sk.train_test_split(logfile_features, test_size=0.4) | |||

test_dataset, valid_dataset = sk.train_test_split(temp_test_dataset, test_size=0.5) | |||

Next we extract the labels from the actual dataset, we need them extra for our training | |||

train_labels = train_dataset.pop('Label') | |||

test_labels = test_dataset.pop('Label') | |||

valid_labels = valid_dataset.pop('Label') | |||

To norm our data correctly we need to get the stats of our training data (mean and standard deviation) | |||

train_stats = train_dataset.describe() | |||

train_stats = train_stats.transpose() | |||

For norming we use a following method | |||

def norm(x, stats): | |||

return (x - stats['mean']) / stats['std'] | |||

This method can then be used the following way | |||

normed_train_data = norm(train_dataset, train_stats) | |||

normed_test_data = norm(test_dataset, train_stats) | |||

normed_valid_dataset = norm(valid_dataset, train_stats) | |||

=== Step 2 - Create a model === | |||

First we need to create a sequential model, which can be trained later | |||

model = Sequential() | model = Sequential() | ||

The next step is to create an input layer | The next step is to create an input layer with exactly as many nodes as features in our training data | ||

model.add(Dense( | model.add(Dense(normed_train_data.shape[1],input_shape=(normed_train_data.shape[1],)) | ||

Next a hidden layer consisting of 128 nodes with the ReLU (Rectified Linear Unit) activation function | Next a hidden layer consisting of 128 nodes with the ReLU (Rectified Linear Unit) activation function | ||

model.add(Dense(128, Activation('relu'))) | model.add(Dense(128, Activation('relu'))) | ||

| Line 29: | Line 78: | ||

Now we could change the learning rate to a specific value, but we just leave it at the default 0.001 | Now we could change the learning rate to a specific value, but we just leave it at the default 0.001 | ||

learning_rate = 0.001 | learning_rate = 0.001 | ||

For the optimizer we just use the Adam Optimizer with the pre-defined learning rate | For the optimizer we just use the Adam Optimizer with the pre-defined learning rate | ||

optimizer = tf.optimizers.Adam(learning_rate) | optimizer = tf.optimizers.Adam(learning_rate) | ||

Lastly we need to compile the model, for the loss function we use | Lastly we need to compile the model, for the loss function we use BinaryCrossentropy, our optimizer and the metric should be the accuarcy of the model | ||

model.compile(loss=tf.keras.losses.BinaryCrossentropy(from_logits=True), | model.compile(loss=tf.keras.losses.BinaryCrossentropy(from_logits=True), | ||

optimizer=optimizer, | optimizer=optimizer, | ||

metrics=['accuracy']) | metrics=['accuracy']) | ||

=== Step 3 - Train the model === | |||

First we set our epochs, a complete pass of the normed training data through the model, and our batch size, after how many datapoints the model gets updated | |||

EPOCHS = 5000 | |||

batch_size = 1024 | |||

To not have to wait for 5000 training epochs to finish and to prevent overfitting we can set an early stop | |||

es = EarlyStopping(monitor='val_loss', mode='min', verbose=1, patience=2) | |||

=== | Finally the training, model fitting, can start | ||

with tf.device('/CPU:0'): | |||

# with tf.device('/GPU:0'): # wenn man mit der Grafikkarte trainieren will | |||

history = model.fit( | |||

normed_train_data, | |||

train_labels, | |||

batch_size=batch_size, | |||

epochs=EPOCHS, | |||

verbose=1, | |||

shuffle=True, | |||

steps_per_epoch=int(normed_train_data.shape[0] / batch_size), | |||

validation_data=(normed_valid_dataset, valid_labels), callbacks=[es], | |||

) | |||

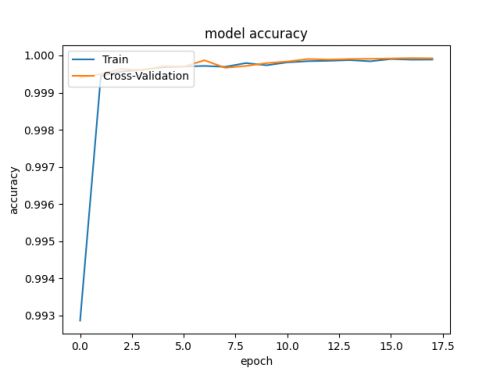

=== Step 4 - Plot the results === | |||

After the training has been completed you can easily plot the accuarcy and validation accuracy during the training using | |||

plt.plot(history.history['accuracy']) | |||

plt.plot(history.history['val_accuracy']) | |||

plt.title('model accuracy') | |||

plt.ylabel('accuracy') | |||

plt.xlabel('epoch') | |||

plt.legend(['Train', 'Cross-Validation'], loc='upper left') | |||

plt.show() | |||

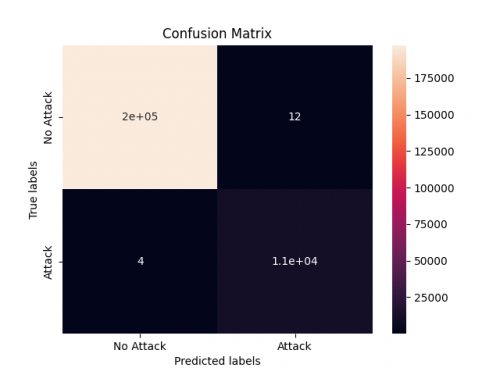

To show the accuarcy when tested against the test data, meaning data the model didn't train with, we can use a confusion matrix the following | |||

ax = plt.subplot() | |||

predict_results = model.predict(normed_test_data) | |||

predict_results = (predict_results > 0.5) | |||

cm = confusion_matrix(test_labels, predict_results) | |||

ax.set_xlabel('Predicted labels') | |||

ax.set_ylabel('True labels') | |||

ax.set_title('Confusion Matrix') | |||

ax.xaxis.set_ticklabels(['No Attack', 'Attack']) | |||

ax.yaxis.set_ticklabels(['No Attack', 'Attack']) | |||

plt.show() | |||

[[File:AI_model_accuracy.png|500px|Model Accuracy]] | |||

[[File:AI_confusion_matrix.png|500px|Confusion Matrix]] | |||

== Used Hardware == | == Used Hardware == | ||

* [[Jetson AGX Xavier Development Kit]] | * [[Jetson AGX Xavier Development Kit]] | ||

== References == | |||

* Dataset https://www.unb.ca/cic/datasets/ids-2018.html | |||

* learndatasci Guide https://www.learndatasci.com/glossary/binary-classification/ | |||

* Binary Classification with keras Guide https://machinelearningmastery.com/binary-classification-tutorial-with-the-keras-deep-learning-library/ | |||

[[Category:Documentation]] | [[Category:Documentation]] | ||

Latest revision as of 15:46, 27 July 2022

Summary

This guide builds a basic AI model that performs binary classification to detect anomalies in logfiles. The model is also suitable for the Jetson AGX Xavier Development Kit.

Requirements

- Packages: TensorFlow, Keras, Pandas, sklearn, numpy, seaborn, matplotlib

- Software: PyCharm or any other Python editor

- Dataset: We used the following dataset: https://www.unb.ca/cic/datasets/ids-2018.html

Description

Step 0 - Import the needed packages/libraries

from keras.callbacks import EarlyStopping, ModelCheckpoint  # for training
from keras.models import Sequential, load_model  # for model
from keras.layers import Dense, Activation  # layers and activation function
import pandas as pd  # read/prep dataset
pd.options.mode.chained_assignment = None  # removes warning
import numpy as np  # read/prep dataset
import sklearn.model_selection as sk  # dataset splitting
import tensorflow as tf  # for model
import seaborn as sns  # plotting
from sklearn.metrics import confusion_matrix  # confusion matrix
from matplotlib import pyplot as plt  # plotting

Step 1 - Read the dataset

First we need to read the data. For that we can use the pandas function 'read_csv', where path is the location of the dataset's CSV file

logfile_features = pd.read_csv(path)

Afterwards we replace the infinite values with NaN and then drop every row that contains a NaN

logfile_features.replace([np.inf, -np.inf], np.nan, inplace=True)
logfile_features.dropna(inplace=True)
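The cleaning step can be tried on a tiny table first. The sketch below is only an illustration: the column names and values are made up, and it uses the non-inplace form of the same two pandas calls so that the number of dropped rows is easy to check.

```python
import numpy as np
import pandas as pd

# Hypothetical miniature table; the real dataset has many more columns.
demo = pd.DataFrame({
    "flow_rate": [1200.0, np.inf, 350.0, np.nan],
    "label": ["Benign", "Benign", "DoS attacks-Slowloris", "Benign"],
})

rows_before = len(demo)
# Turn +/-inf into NaN, then drop every row that contains a NaN.
demo = demo.replace([np.inf, -np.inf], np.nan).dropna()
rows_dropped = rows_before - len(demo)
print(f"dropped {rows_dropped} of {rows_before} rows")
```

Comparing the row count before and after is a cheap way to notice when cleaning removes more data than expected.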

Our dataset has labels which define whether an entry is an attack or not, so we replace them with numerical values (0 and 1)

In our case Benign stands for standard user traffic, while DoS attacks-Slowloris and DoS attacks-GoldenEye stand for traffic where a DoS attack occurred

logfile_features["Label"] = logfile_features["Label"].replace({"Benign": 0, "DoS attacks-Slowloris": 1, "DoS attacks-GoldenEye": 1})
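A replacement by dictionary leaves any label that is not in the dictionary unchanged, which would leave text values in the label column. The following standard-library sketch shows one way to make that case visible; `LABEL_MAP` and `encode_labels` are hypothetical names introduced for this illustration and are not part of the guide's code.

```python
# Mapping taken from the text: benign traffic is 0, both DoS attacks are 1.
LABEL_MAP = {
    "Benign": 0,
    "DoS attacks-Slowloris": 1,
    "DoS attacks-GoldenEye": 1,
}

def encode_labels(labels):
    """Return 0/1 labels, raising if a label is missing from LABEL_MAP."""
    unknown = sorted(set(labels) - set(LABEL_MAP))
    if unknown:
        raise ValueError(f"unmapped labels: {unknown}")
    return [LABEL_MAP[label] for label in labels]

encoded = encode_labels(["Benign", "DoS attacks-GoldenEye", "Benign"])
```

If the CSV file in use contains other attack types, they would have to be added to the mapping or filtered out beforehand.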

Next we shuffle our dataset

logfile_features = logfile_features.sample(frac=1)

Now we need to split our data into 3 parts: training data (60%), test data (20%) and validation data (20%). To do that we first split off 40% of the data and then divide that part in half

train_dataset, temp_test_dataset = sk.train_test_split(logfile_features, test_size=0.4)
test_dataset, valid_dataset = sk.train_test_split(temp_test_dataset, test_size=0.5)
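To see why the two calls give a 60/20/20 split, here is a minimal sketch that uses only the standard library in place of train_test_split. The function name `three_way_split` and its `seed` parameter are assumptions made for this example.

```python
import random

def three_way_split(rows, seed=0):
    """Shuffle rows, then cut them into 60% train, 20% test, 20% validation."""
    rows = list(rows)
    random.Random(seed).shuffle(rows)
    n_train = len(rows) * 6 // 10          # first split keeps 60% for training
    n_test = (len(rows) - n_train) // 2    # second split halves the remaining 40%
    train = rows[:n_train]
    test = rows[n_train:n_train + n_test]
    valid = rows[n_train + n_test:]
    return train, test, valid

train, test, valid = three_way_split(range(1000))
```

With scikit-learn itself, passing a fixed `random_state` to train_test_split should make the split reproducible between runs.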

Next we separate the labels from the features, because the labels are passed to the training separately

train_labels = train_dataset.pop('Label')
test_labels = test_dataset.pop('Label')
valid_labels = valid_dataset.pop('Label')

To normalize our data correctly we need the statistics of our training data (mean and standard deviation)

train_stats = train_dataset.describe()
train_stats = train_stats.transpose()

For normalization we use the following function

def norm(x, stats):
    return (x - stats['mean']) / stats['std']

This function is then applied to all three datasets, always with the statistics of the training data

normed_train_data = norm(train_dataset, train_stats)
normed_test_data = norm(test_dataset, train_stats)
normed_valid_dataset = norm(valid_dataset, train_stats)
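The normalization can be checked by hand on a single column. The sketch below uses the standard library instead of pandas; `fit_stats` and `norm_column` are hypothetical helpers, and the handling of a zero standard deviation is an addition of this example (with the function above, a constant column would yield NaN or infinite values).

```python
import statistics

def fit_stats(values):
    """Mean and sample standard deviation of one training column."""
    return statistics.mean(values), statistics.stdev(values)

def norm_column(values, mean, std):
    """Z-score values with the given training statistics."""
    if std == 0:
        # Assumption of this sketch: map a constant column to zeros
        # instead of dividing by zero.
        return [0.0 for _ in values]
    return [(v - mean) / std for v in values]

train_col = [2.0, 4.0, 6.0]   # made-up training values
test_col = [4.0, 8.0]         # made-up test values

mean, std = fit_stats(train_col)
normed_train = norm_column(train_col, mean, std)
normed_test = norm_column(test_col, mean, std)  # reuses the training statistics
```

Reusing the training mean and standard deviation for the test and validation data keeps information about those datasets out of the training process.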

Step 2 - Create a model

First we need to create a sequential model, which can be trained later

model = Sequential()

The next step is to create an input layer with exactly as many nodes as there are features in our training data

model.add(Dense(normed_train_data.shape[1], input_shape=(normed_train_data.shape[1],)))

Next comes a hidden layer consisting of 128 nodes with the ReLU (Rectified Linear Unit) activation function

model.add(Dense(128, activation='relu'))

And finally the output layer consisting of 1 node which represents 'attack' or 'no attack'

model.add(Dense(1))
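The size of this network can be worked out by hand: a fully connected layer has one weight per input and node plus one bias per node. The following sketch does that arithmetic; the feature count of 10 is a made-up example value, not the width of the real dataset.

```python
def dense_params(n_inputs, n_units):
    """Weights plus biases of a fully connected layer."""
    return n_inputs * n_units + n_units

def model_params(n_features, hidden_units=128):
    """Trainable parameters of the three Dense layers described above."""
    first = dense_params(n_features, n_features)     # Dense(n_features)
    hidden = dense_params(n_features, hidden_units)  # Dense(128, relu)
    output = dense_params(hidden_units, 1)           # Dense(1)
    return first + hidden + output

total = model_params(10)  # 10 features is a made-up example value
```

Calling model.summary() on the Keras model should report the corresponding per-layer counts for the actual number of features.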

We could set the learning rate to a different value here, but we leave it at the default of 0.001

learning_rate = 0.001

For the optimizer we just use the Adam Optimizer with the pre-defined learning rate

optimizer = tf.optimizers.Adam(learning_rate)

Lastly we need to compile the model. For the loss function we use BinaryCrossentropy, we pass our optimizer, and the metric is the accuracy of the model

model.compile(loss=tf.keras.losses.BinaryCrossentropy(from_logits=True), optimizer=optimizer, metrics=['accuracy'])
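Because the output layer has no activation, the model outputs a logit rather than a probability, which is why from_logits=True is set. The sketch below is a plain-Python illustration of that relationship, not the Keras implementation: it compares binary cross-entropy computed from a logit (in a commonly used numerically stable form) with the textbook formula applied to the sigmoid of that logit.

```python
import math

def sigmoid(z):
    """Map a logit to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def bce_from_logit(y_true, z):
    """Binary cross-entropy computed directly from the logit z."""
    # Stable form: max(z, 0) - z * y + log(1 + exp(-|z|))
    return max(z, 0.0) - z * y_true + math.log1p(math.exp(-abs(z)))

def bce_from_probability(y_true, p):
    """Textbook binary cross-entropy on a probability p."""
    return -(y_true * math.log(p) + (1 - y_true) * math.log(1 - p))

logit = 1.5  # made-up model output
loss_a = bce_from_logit(1, logit)
loss_b = bce_from_probability(1, sigmoid(logit))
```

Both values agree up to rounding. It also follows that a logit above 0 corresponds to a probability above 0.5, which matters when the predictions are thresholded later.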

Step 3 - Train the model

First we set the number of epochs (one epoch is a complete pass of the normalized training data through the model) and the batch size (the number of data points processed before the model is updated)

EPOCHS = 5000
batch_size = 1024

To avoid waiting for all 5000 training epochs to finish and to prevent overfitting, we set up early stopping: training ends once the validation loss has not improved for 2 consecutive epochs

es = EarlyStopping(monitor='val_loss', mode='min', verbose=1, patience=2)
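The patience logic can be sketched in a few lines of plain Python. This is a simplified model of the idea, not the Keras implementation; `early_stop_epoch` is a hypothetical helper and the loss values are invented.

```python
def early_stop_epoch(val_losses, patience=2):
    """Return the 0-based epoch at which training would stop, or None."""
    best = float("inf")
    wait = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best:
            best = loss   # new best validation loss, reset the counter
            wait = 0
        else:
            wait += 1     # another epoch without improvement
            if wait >= patience:
                return epoch
    return None

losses = [0.90, 0.70, 0.60, 0.65, 0.61, 0.50]  # made-up validation losses
stopped_at = early_stop_epoch(losses)
```

In this made-up run the last value would have been a new best, but training has already stopped by then. A small patience saves time but can end training during a temporary plateau. Keras also offers a restore_best_weights option on EarlyStopping for returning to the best epoch.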

Finally the training (model fitting) can start

with tf.device('/CPU:0'):
# with tf.device('/GPU:0'):  # use this line instead to train on the GPU
    history = model.fit(
        normed_train_data,
        train_labels,
        batch_size=batch_size,
        epochs=EPOCHS,
        verbose=1,
        shuffle=True,
        steps_per_epoch=int(normed_train_data.shape[0] / batch_size),
        validation_data=(normed_valid_dataset, valid_labels),
        callbacks=[es],
    )
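The steps_per_epoch expression counts the full batches that fit into the training data. The short sketch below only reproduces that arithmetic; the number of training rows is a hypothetical value.

```python
def steps_per_epoch(n_samples, batch_size):
    """Number of full batches in one pass, as int(n_samples / batch_size)."""
    return int(n_samples / batch_size)

n_samples = 10_000      # hypothetical number of training rows
batch_size = 1024       # value used in the text
steps = steps_per_epoch(n_samples, batch_size)
covered = steps * batch_size        # rows that fit into full batches
left_over = n_samples - covered     # rows outside those full batches
```

The model is therefore updated `steps` times per epoch, and a remainder smaller than one batch falls outside that count.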

Step 4 - Plot the results

After the training has been completed you can easily plot the training accuracy and the validation accuracy over the epochs using

plt.plot(history.history['accuracy'])
plt.plot(history.history['val_accuracy'])
plt.title('model accuracy')
plt.ylabel('accuracy')
plt.xlabel('epoch')
plt.legend(['Train', 'Validation'], loc='upper left')
plt.show()

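To show the accuracy on the test data, meaning data the model was not trained on, we can use a confusion matrix as follows. Because the output layer returns logits, we apply a sigmoid before thresholding at 0.5

ax = plt.subplot()
predict_results = model.predict(normed_test_data)
predict_results = (tf.sigmoid(predict_results).numpy() > 0.5)  # logits -> probabilities -> classes
cm = confusion_matrix(test_labels, predict_results)
sns.heatmap(cm, annot=True, fmt='g', ax=ax)  # draw the matrix on the axes
ax.set_xlabel('Predicted labels')
ax.set_ylabel('True labels')
ax.set_title('Confusion Matrix')
ax.xaxis.set_ticklabels(['No Attack', 'Attack'])
ax.yaxis.set_ticklabels(['No Attack', 'Attack'])
plt.show()

Figure: Model Accuracy (AI_model_accuracy.png)

Figure: Confusion Matrix (AI_confusion_matrix.png)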
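To read the confusion matrix, it helps to see how its four cells are counted and which metrics follow from them. The sketch below rebuilds the 2x2 layout (rows are true labels, columns are predicted labels) in plain Python; the labels and predictions are made up and `confusion_counts` is a hypothetical helper.

```python
def confusion_counts(y_true, y_pred):
    """Return [[TN, FP], [FN, TP]] for 0/1 labels (rows: true, columns: predicted)."""
    matrix = [[0, 0], [0, 0]]
    for t, p in zip(y_true, y_pred):
        matrix[t][p] += 1
    return matrix

y_true = [0, 0, 0, 1, 1, 1, 1, 0]   # made-up labels
y_pred = [0, 1, 0, 1, 0, 1, 1, 0]   # made-up predictions

(tn, fp), (fn, tp) = confusion_counts(y_true, y_pred)
accuracy = (tp + tn) / len(y_true)
precision = tp / (tp + fp)   # share of predicted attacks that were real attacks
recall = tp / (tp + fn)      # share of real attacks that were detected
```

For attack detection, recall is often the more important number, since a missed attack (false negative) is usually costlier than a false alarm.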

Used Hardware

- Jetson AGX Xavier Development Kit

References

- Dataset https://www.unb.ca/cic/datasets/ids-2018.html

- learndatasci Guide https://www.learndatasci.com/glossary/binary-classification/

- Binary Classification with keras Guide https://machinelearningmastery.com/binary-classification-tutorial-with-the-keras-deep-learning-library/